What is Eye Tracking?

Eye tracking is the process of measuring eye movements, typically to determine where someone is looking, a location known as the “point of gaze”. Eye-tracking devices such as the EyeLink 1000 Plus are used by researchers from a wide range of disciplines to gain insight into which types of information people choose to process when performing tasks. For example, psychologists interested in how we process language record the eye movements we make when reading text. Other researchers may be interested in where we look when making judgments about facial emotions. You can read about many other uses of eye tracking in research in our featured articles and blog posts. In addition to tracking gaze, modern eye trackers can provide other useful measures such as pupil size (for pupillometry research) and blink rate.

How Do Eye Trackers Work?

One of the biggest technological changes over the last couple of decades has been the near-universal adoption of video-based eye tracking as the technique of choice. All SR Research EyeLink systems employ video-based eye tracking, as do most other commercially available eye trackers. So how do video-based eye trackers actually work?

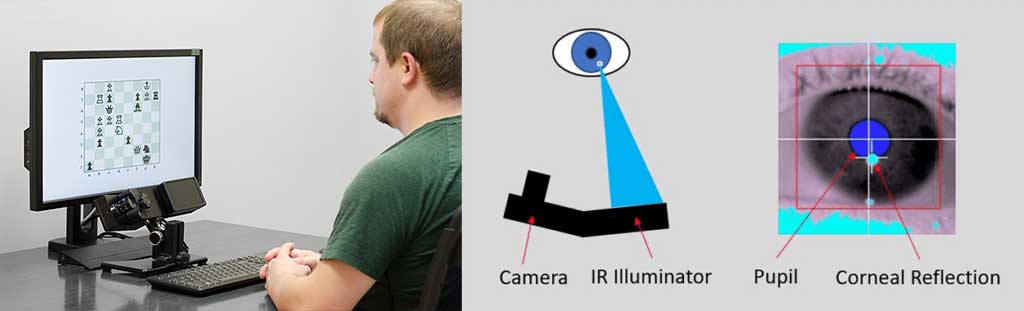

At the heart of all video-based eye tracking is a camera (or cameras) that takes a series of images of the eye. Both the EyeLink 1000 Plus and the EyeLink Portable Duo use a single camera that is capable of taking up to 2000 images of both eyes every second. Within 3 ms of each eye image being taken, EyeLink systems work out where on the screen the participant is looking and relay this information back to the computer controlling stimulus presentation. So how is this done? The eye-tracking software uses image processing algorithms to identify two key locations in each image sent by the eye-tracking camera: the center of the pupil and the center of the corneal reflection. The corneal reflection is simply the reflection of a fixed light source (the infrared illuminator) that sits next to the camera, as illustrated below.

Pupil-Corneal Reflection (P-CR) Eye Tracking

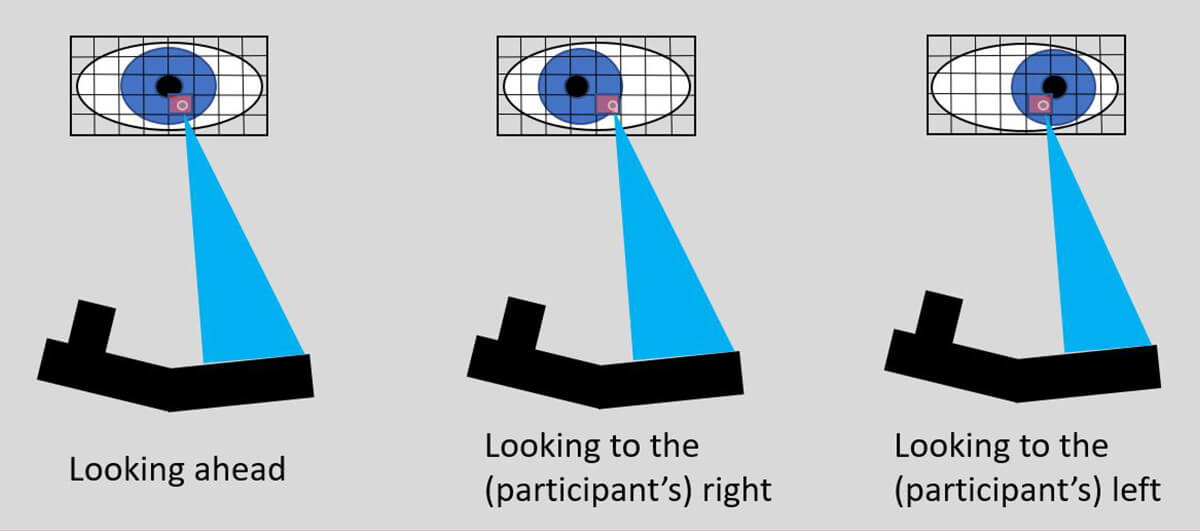
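As a rough illustration of the image processing step described above, the sketch below locates a pupil center and a corneal reflection center in a toy grayscale frame by simple intensity thresholding. It is not the EyeLink algorithm: the function names, the threshold values (`pupil_max`, `cr_min`) and the synthetic frame are all assumptions made for this example, and it assumes the pupil is the darkest region of the image and the corneal reflection the brightest.

```python
import numpy as np


def centroid(mask):
    """Return the (x, y) centroid of the True pixels in a boolean mask."""
    ys, xs = np.nonzero(mask)
    if xs.size == 0:
        raise ValueError("no pixels matched the threshold")
    return float(xs.mean()), float(ys.mean())


def find_pupil_and_cr(image, pupil_max=50, cr_min=200):
    """Estimate pupil and CR centers by thresholding (hypothetical thresholds).

    Assumes the pupil is the only very dark region and the corneal
    reflection is the only very bright region in the frame.
    """
    pupil_center = centroid(image <= pupil_max)
    cr_center = centroid(image >= cr_min)
    return pupil_center, cr_center


def synthetic_eye(pupil_xy, cr_xy, shape=(120, 160)):
    """Build a toy frame: mid-gray background, dark pupil disc, bright CR disc."""
    ys, xs = np.mgrid[0:shape[0], 0:shape[1]]
    img = np.full(shape, 128, dtype=np.uint8)
    img[(xs - pupil_xy[0]) ** 2 + (ys - pupil_xy[1]) ** 2 <= 15 ** 2] = 20
    img[(xs - cr_xy[0]) ** 2 + (ys - cr_xy[1]) ** 2 <= 3 ** 2] = 255
    return img


# Toy frame with the pupil centered at (80, 60) and the CR at (100, 70),
# both in camera pixel coordinates.
frame = synthetic_eye(pupil_xy=(80, 60), cr_xy=(100, 70))
pupil_center, cr_center = find_pupil_and_cr(frame)
print("pupil center:", pupil_center)
print("CR center:", cr_center)
```

Real camera images contain eyelids, eyelashes and noise, so a plain threshold-and-centroid approach like this one would need considerably more care in practice. The sketch is only meant to show what "finding two key locations in each image" means.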

When the eye rotates, the location of the center of the pupil on the camera sensor changes. However, when the head is stabilized, the location of the corneal reflection (CR) remains relatively fixed on the camera sensor, because the source of the reflection does not move relative to the camera. The figure below illustrates what the camera sees when an eye looks ahead, then rotates to one side and then to the other side. As you can see, the center of the CR remains in roughly the same position (in terms of camera pixel coordinates), whereas the center of the pupil moves.

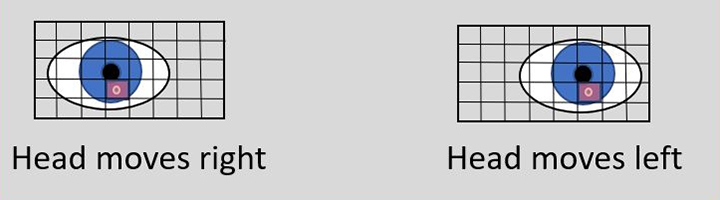

If the eye were perfectly fixed in space and simply rotated around its own center, then tracking the change in the pupil center on the camera sensor alone would be sufficient to determine where gaze is located. Indeed, pupil-only tracking may still be used in some head-mounted or glasses-based eye trackers, where the relationship between the camera and the eye remains relatively fixed no matter how the head moves. However, for desktop or remote eye trackers, even with the head stabilized using a chin or forehead rest, small movements of the head (as opposed to the eye) are impossible to prevent, and these head movements will shift the location of the pupil on the eye-tracking camera sensor. The figure below illustrates the view of the eye from the camera when the head moves slightly from side to side.

Why the Corneal Reflection is so Important

As you can see, in this case, both the pupil *and* the corneal reflection move on the camera sensor. So how do eye trackers distinguish changes in pupil position on the camera sensor that result from rotations of the eye from those that result from movements of the head? Critically, with head movements the relationship between the center of the pupil and the center of the corneal reflection remains the same, whereas when the eye rotates, the relationship changes. Modern video-based eye trackers exploit this difference in the Pupil-CR relationship to compensate for head movements. In “Pupil-CR” tracking, the change in CR position is essentially “subtracted” from the change in pupil position. This makes it possible to separate movements of the pupil center on the camera sensor that result from genuine rotations of the eye from those that result from shifts in head position.
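The “subtraction” idea can be illustrated with a few lines of arithmetic. The sketch below is a simplified illustration, not the EyeLink implementation: the function name `pcr_vector` and all pixel coordinates are made up for the example, and the head shift is idealized as moving the pupil and the CR by exactly the same amount on the sensor.

```python
def pcr_vector(pupil, cr):
    """Return the pupil-minus-CR difference vector in camera pixels."""
    return (pupil[0] - cr[0], pupil[1] - cr[1])


# Baseline frame: eye looking straight ahead (hypothetical coordinates).
baseline = pcr_vector(pupil=(80.0, 60.0), cr=(86.0, 64.0))

# Small head shift: pupil and CR both move by (+5, -2) pixels,
# so the difference vector is unchanged.
head_shift = pcr_vector(pupil=(85.0, 58.0), cr=(91.0, 62.0))

# Eye rotation: the pupil moves by +10 pixels in x while the CR stays put,
# so the difference vector changes.
eye_rotation = pcr_vector(pupil=(90.0, 60.0), cr=(86.0, 64.0))

print("baseline:    ", baseline)
print("head shift:  ", head_shift)
print("eye rotation:", eye_rotation)
```

Because the head shift leaves the pupil-CR vector unchanged while the eye rotation alters it, a tracker that works from this vector rather than from the raw pupil position is largely insensitive to small head movements.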

Find Out More

If you’d like to learn more about eye tracking and how it could help your research, check out our Eye Tracking Solutions page and blog posts. You can search our database of over 12,000 EyeLink publications and see how people in your field are using eye-tracking data. There are also a number of helpful books:

Contact

If you have any questions about our eye trackers, or eye-tracking research in general, please don’t hesitate to contact us. We are always happy to help. You can call us at +1-613-271-8686 or send us an email.