
We have recently finished updating our database of EyeLink publications – there were more than 900 papers published in 2019 alone, and the database now contains well over 8000 publications in total. Each publication is checked individually to ensure that it contains data collected using an EyeLink eye tracker (rather than just referring to data collected with an EyeLink, as in a meta-analysis or review article) and that the research is published in a peer-reviewed journal.

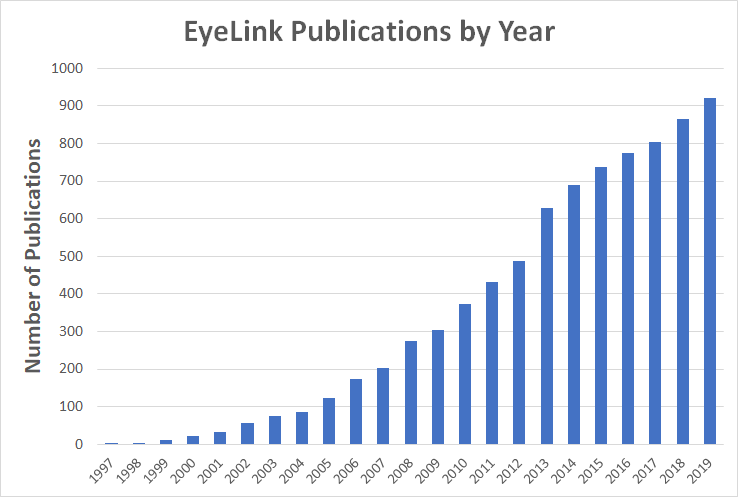

Publications by Year

In a previous blog post I plotted the number of publications per year, and an updated version of that plot is included below:

Highly Cited EyeLink Publications

The earlier blog post also listed the “top” journals for EyeLink publications – both with respect to the number of EyeLink articles and with respect to the journal’s impact factor. This year I thought it might be interesting to list some of the most highly cited articles in our database. Determining citation counts is a somewhat inexact science. There are three main sources of information on article citation counts – Web of Science, Scopus and Google Scholar. While the advantages and disadvantages of each of these sources are a topic of lively debate (Harzing has written extensively on this – see e.g. this blog), Google Scholar has the twin advantages of offering very comprehensive coverage and being freely accessible.

The list below is a selection of 15 EyeLink articles, all of which have more than 500 citations according to Google Scholar. The list was generated by searching the top 20 journals by volume of EyeLink articles, and the top 10 journals by Impact Factor, in our database. It is not intended to be exhaustive, and the articles are listed in no particular order. I think the list provides a fascinating illustration of the sheer breadth (and enormous impact) of the research that EyeLink eye trackers have been involved in.
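For readers who want to run a similar selection on their own bibliography, here is a minimal Python sketch of the filtering described above. It is an illustration only, not the process actually used for our database: the record fields (`title`, `journal`, `impact_factor`, `citations`) and the function name `select_highly_cited` are hypothetical, and the citation counts are assumed to have been looked up beforehand (for example, by hand on Google Scholar).

```python
from collections import Counter


def select_highly_cited(records, top_by_volume=20, top_by_impact=10,
                        min_citations=500):
    """Return records from the top journals that exceed a citation threshold.

    `records` is an iterable of dicts with the hypothetical keys
    'title', 'journal', 'impact_factor' and 'citations'.
    """
    records = list(records)

    # Journals ranked by how many articles they contribute to the database.
    volume = Counter(r["journal"] for r in records)
    by_volume = {journal for journal, _ in volume.most_common(top_by_volume)}

    # Journals ranked by impact factor (one value per journal).
    impact = {r["journal"]: r["impact_factor"] for r in records}
    by_impact = set(sorted(impact, key=impact.get, reverse=True)[:top_by_impact])

    # Search the union of both journal sets, keeping only highly cited articles.
    journals = by_volume | by_impact
    return [r for r in records
            if r["journal"] in journals and r["citations"] > min_citations]
```

Calling `select_highly_cited(records)` with the default arguments mirrors the thresholds mentioned above (top 20 journals by volume, top 10 by impact factor, more than 500 citations). The result keeps the input order, so it is "in no particular order" in the same sense as the list below.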

D'souza, Joanita F.; Rich, Jessima M.; Cloherty, Shaun L.; Price, Nicholas S. C.; Hagan, Maureen A. Topographic organization of saccade-related response field properties in the marmoset posterior parietal cortex Journal Article In: eNeuro, vol. 12, no. 10, pp. 1–12, 2025. @article{Dsouza2025,Despite various histological, electrophysiological, and imaging studies, the topographic organization of saccade-related activity in the posterior parietal cortex (PPC) has been notoriously difficult to characterize. In part, this is because areas of interest in PPC are often embedded deep in sulci in macaques and humans. Understanding the extent of topographic organization in PPC can provide insights into the computation contributions of PPC. The lissencephalic cortex of the common marmoset offers a unique opportunity to investigate fine-scale topographic organization in PPC. Recordings were obtained from the PPC of two male marmosets performing a visually guided center-out saccade task with 8 or 36 peripheral targets using multichannel electrode arrays with 100 μm spacing. By plotting the pattern of saccade direction tuning preferences across all penetrations and cortical depths, we uncovered topographic organizational features within the PPC. Like other primates, multiunits in marmoset PPC tend to prefer saccade targets in the contralateral visual field. The results detail how preference for saccadic direction changes in a systematic manner across cortical distance, such that response units closer in proximity tend to show systematic changes in their tuning preferences. Across cortical distance, the visual field was also systematically encoded but reversals in direction varied across penetrations. The analysis highlights the likelihood of multiple representations of the visual field for saccade direction preference across PPC. 
These novel findings suggest a possible functional organization of saccade-related activity in marmoset PPC, giving insights into the computational capacity of the PPC. |

Farrell, Julia; Conte, Stefania; Barry-Anwar, Ryan; Scott, Lisa S. Face race and sex impact visual fixation strategies for upright and inverted faces in 3- to 6-year-old children Journal Article In: Developmental Psychobiology, vol. 65, no. 2, pp. 1–15, 2023. @article{Farrell2023,Everyday face experience tends to be biased, such that infants and young children interact more often with own-race and female faces leading to differential processing of faces within these groups relative to others. In the present study, visual fixation strategies were recorded using eye tracking to determine the extent to which face race and sex/gender impact a key index of face processing in 3- to 6-year-old children (n = 47). Children viewed male and female upright and inverted White and Asian faces while visual fixations were recorded. Face orientation was found to have robust effects on children's visual fixations, such that children exhibited shorter first fixation and average fixation durations and a greater number of fixations for inverted compared to upright face trials. First fixations to the eye region were also greater for upright compared to inverted faces. Fewer fixations and longer duration fixations were found for trials with male compared to female faces and for upright compared to inverted unfamiliar-race faces, but not familiar-race faces. These findings demonstrate evidence of differential fixation strategies toward different types of faces in 3- to 6-year-old children, illustrating the importance of experience in the development of visual attention to faces. |

Maith, Oliver; Baladron, Javier; Einhäuser, Wolfgang; Hamker, Fred H. Exploration behavior after reversals is predicted by STN-GPe synaptic plasticity in a basal ganglia model Journal Article In: iScience, vol. 26, no. 5, pp. 1–23, 2023. @article{Maith2023,Humans can quickly adapt their behavior to changes in the environment. Classical reversal learning tasks mainly measure how well participants can disengage from a previously successful behavior but not how alternative responses are explored. Here, we propose a novel 5-choice reversal learning task with alternating position-reward contingencies to study exploration behavior after a reversal. We compare human exploratory saccade behavior with a prediction obtained from a neuro-computational model of the basal ganglia. A new synaptic plasticity rule for learning the connectivity between the subthalamic nucleus (STN) and external globus pallidus (GPe) results in exploration biases to previously rewarded positions. The model simulations and human data both show that during experimental experience exploration becomes limited to only those positions that have been rewarded in the past. Our study demonstrates how quite complex behavior may result from a simple sub-circuit within the basal ganglia pathways. |

Barretto-García, Miguel; Hollander, Gilles; Grueschow, Marcus; Polanía, Rafael; Woodford, Michael; Ruff, Christian C. Individual risk attitudes arise from noise in neurocognitive magnitude representations Journal Article In: Nature Human Behaviour, vol. 7, no. 9, pp. 1551–1567, 2023. @article{BarrettoGarcia2023,Humans are generally risk averse, preferring smaller certain over larger uncertain outcomes. Economic theories usually explain this by assuming concave utility functions. Here, we provide evidence that risk aversion can also arise from relative underestimation of larger monetary payoffs, a perceptual bias rooted in the noisy logarithmic coding of numerical magnitudes. We confirmed this with psychophysics and functional magnetic resonance imaging, by measuring behavioural and neural acuity of magnitude representations during a magnitude perception task and relating these measures to risk attitudes during separate risky financial decisions. Computational modelling indicated that participants use similar mental magnitude representations in both tasks, with correlated precision across perceptual and risky choices. Participants with more precise magnitude representations in parietal cortex showed less variable behaviour and less risk aversion. Our results highlight that at least some individual characteristics of economic behaviour can reflect capacity limitations in perceptual processing rather than processes that assign subjective values to monetary outcomes. |

Meirhaeghe, Nicolas; Sohn, Hansem; Jazayeri, Mehrdad A precise and adaptive neural mechanism for predictive temporal processing in the frontal cortex Journal Article In: Neuron, vol. 109, no. 18, pp. 2995–3011.e5, 2021. @article{Meirhaeghe2021,The theory of predictive processing posits that the brain computes expectations to process information predictively. Empirical evidence in support of this theory, however, is scarce and largely limited to sensory areas. Here, we report a precise and adaptive mechanism in the frontal cortex of non-human primates consistent with predictive processing of temporal events. We found that the speed of neural dynamics is precisely adjusted according to the average time of an expected stimulus. This speed adjustment, in turn, enables neurons to encode stimuli in terms of deviations from expectation. This lawful relationship was evident across multiple experiments and held true during learning: when temporal statistics underwent covert changes, neural responses underwent predictable changes that reflected the new mean. Together, these results highlight a precise mathematical relationship between temporal statistics in the environment and neural activity in the frontal cortex that may serve as a mechanism for predictive temporal processing. |

Kozak, Anna; Wieteska, Michał; Ninghetto, Marco; Szulborski, Kamil; Gałecki, Tomasz; Szaflik, Jacek; Burnat, Kalina Motion-based acuity task: Full visual field measurement of shape and motion perception Journal Article In: Translational Vision Science & Technology, vol. 10, no. 1, pp. 9, 2021. @article{Kozak2021,Purpose: Damage of retinal representation of the visual field affects its local features and the spared, unaffected parts. Measurements of visual deficiencies in ophthalmological patients are separated for central (shape) or peripheral (motion and space perception) properties, and acuity tasks rely on stationary stimuli. We explored the benefit of measuring shape and motion perception simultaneously using a new motion-based acuity task. Methods: Eight healthy control subjects, three patients with retinitis pigmentosa (RP; tunnel vision), and 2 patients with Stargardt disease (STGD) juvenile macular degeneration were included. To model the peripheral loss, we narrowed the visual field in controls to 10 degrees. Negative and positive contrast of motion signals were tested in random-dot kinematograms (RDKs), where shapes were separated from the background by the motion of dots based on coherence, direction, or velocity. The task was to distinguish a circle from an ellipse. The difficulty of the task increased as ellipse became more circular until reaching the acuity limit. Results: High velocity, negative contrast was more difficult for all, and for patients with STGD, it was too difficult to participate. A slower velocity improved acuity for all participants. Conclusions: Proposed acuity testing not only allows for the full assessment of vision but also advances the capability of standard testing with the potential to detect spare visual functions. 
Translational Relevance: The motion-based acuity task might be a practical tool for assessing vision loss and revealing undetected, undamaged, or strengthened properties of the injured visual system by standard testing, as suggested here for two patients with STGD and three patients with RP. |

Giesel, Martin; Yakovleva, Alexandra; Bloj, Marina; Wade, Alex R.; Norcia, Anthony M.; Harris, Julie M. Relative contributions to vergence eye movements of two binocular cues for motion-in-depth Journal Article In: Scientific Reports, vol. 9, pp. 17412, 2019. @article{Giesel2019,When we track an object moving in depth, our eyes rotate in opposite directions. This type of “disjunctive” eye movement is called horizontal vergence. The sensory control signals for vergence arise from multiple visual cues, two of which, changing binocular disparity (CD) and inter-ocular velocity differences (IOVD), are specifically binocular. While it is well known that the CD cue triggers horizontal vergence eye movements, the role of the IOVD cue has only recently been explored. To better understand the relative contribution of CD and IOVD cues in driving horizontal vergence, we recorded vergence eye movements from ten observers in response to four types of stimuli that isolated or combined the two cues to motion-in-depth, using stimulus conditions and CD/IOVD stimuli typical of behavioural motion-in-depth experiments. An analysis of the slopes of the vergence traces and the consistency of the directions of vergence and stimulus movements showed that under our conditions IOVD cues provided very little input to vergence mechanisms. The eye movements that did occur coinciding with the presentation of IOVD stimuli were likely not a response to stimulus motion, but a phoria initiated by the absence of a disparity signal. |

Costela, Francisco M.; McCamy, Michael B.; Coffelt, Mary; Otero-Millan, Jorge; Macknik, Stephen L.; Martinez-Conde, Susana Changes in visibility as a function of spatial frequency and microsaccade occurrence Journal Article In: European Journal of Neuroscience, vol. 45, no. 3, pp. 433–439, 2017. @article{Costela2017,Fixational eye movements (FEM), including microsaccades, drift, and tremor, shift our eye position during ocular fixation, producing retinal motion that is thought to help visibility by counteracting neural adaptation to unchanging stimulation. Yet, how each FEM type influences this process is still debated. Recent studies found little to no relationship between microsaccades and visual perception of spatial frequencies (SF), and concluded that any effects microsaccades may have on vision do not extend to the SF domain. However, these conclusions were based on coarse analyses that make it hard to appreciate the actual effects of microsaccades on target visibility as a function of SF. Thus, how microsaccades contribute to the visibility of stimuli of different SFs remains unclear. Here we asked how the visibility of targets of various SFs changed over time, in relationship with concurrent microsaccade production. Participants continuously reported on changes in target visibility, allowing us to time-lock ongoing changes in microsaccade parameters to perceptual transitions in visibility. Microsaccades restored/increased the visibility of low SF targets more efficiently than that of high SF targets. Yet, microsaccade rates rose before periods of increased visibility, and dropped before periods of diminished visibility, suggesting that microsaccades boosted target visibility across a wide range of SFs. Our data also indicate that visual stimuli fade/become harder to see less often in the presence of microsaccades. In addition, larger microsaccades restored/increased target visibility more effectively than smaller microsaccades. 
These combined results support the proposal that microsaccades enhance visibility across a broad variety of SFs. |

Azarian, Bobby; Esser, Elizabeth G.; Peterson, Matthew S. Watch out! Directional threat-related postures cue attention and the eyes Journal Article In: Cognition and Emotion, vol. 30, no. 3, pp. 561–569, 2016. @article{Azarian2016a,Previous work indicates that threatening facial expressions with averted eye gaze can act as a signal of imminent danger, enhancing attentional orienting in the gazed-at direction. However, this threat-related gaze-cueing effect is only present in individuals reporting high levels of anxiety. The present study used eye tracking to investigate whether additional directional social cues, such as averted angry and fearful human body postures, not only cue attention, but also the eyes. The data show that although body direction did not predict target location, anxious individuals made faster eye movements when fearful or angry postures were facing towards (congruent condition) rather than away (incongruent condition) from peripheral targets. Our results provide evidence for attentional cueing in response to threat-related directional body postures in those with anxiety. This suggests that for such individuals, attention is guided by threatening social stimuli in ways that can influence and bias eye movement behaviour. |

Chen, Lijing; Yang, Yufang Emphasizing the only character: EMPHASIS, attention and contrast Journal Article In: Cognition, vol. 136, pp. 222–227, 2015. @article{Chen2015b,In conversations, pragmatic information such as emphasis is important for identifying the speaker's/writer's intention. The present research examines the cognitive processes involved in emphasis processing. Participants read short discourses that introduced one or two character(s), with the character being emphasized or non-emphasized in subsequent texts. Eye movements showed that: (1) early processing of the emphasized word was facilitated, which may have been due to increased attention allocation, whereas (2) late integration of the emphasized character was inhibited when the discourse involved only this character. These results indicate that it is necessary to include other characters as contrastive characters to facilitate the integration of an emphasized character, and support the existence of a relationship between Emphasis and Contrast computation. Taken together, our findings indicate that both attention allocation and contrast computation are involved in emphasis processing, and support the incremental nature of sentence processing and the importance of contrast in discourse comprehension. |

Juhasz, Barbara J.; Gullick, Margaret M.; Shesler, Leah W. The effects of age-of-acquisition on ambiguity resolution: Evidence from eye movements Journal Article In: Journal of Eye Movement Research, vol. 4, no. 1, pp. 1–14, 2011. @article{Juhasz2011a,Words that are rated as acquired earlier in life receive shorter fixation durations than later acquired words, even when word frequency is adequately controlled (Juhasz & Rayner, 2003; 2006). Some theories posit that age-of-acquisition (AoA) affects the semantic representation of words (e.g., Steyvers & Tenenbaum, 2005), while others suggest that AoA should have an influence at multiple levels in the mental lexicon (e.g. Ellis & Lambon Ralph, 2000). In past studies, early and late AoA words have differed from each other in orthography, phonology, and meaning, making it difficult to localize the influence of AoA. Two experiments are reported which examined the locus of AoA effects in reading. Both experiments used balanced ambiguous words which have two equally-frequent meanings acquired at different times (e.g. pot, tick). In Experiment 1, sentence context supporting either the early- or late-acquired meaning was presented prior to the ambiguous word; in Experiment 2, disambiguating context was presented after the ambiguous word. When prior context disambiguated the ambiguous word, meaning AoA influenced the processing of the target word. However, when disambiguating sentence context followed the ambiguous word, meaning frequency was the more important variable and no effect of meaning AoA was observed. These results, when combined with the past results of Juhasz and Rayner (2003; 2006) suggest that AoA influences access to multiple levels of representation in the mental lexicon. The results also have implications for theories of lexical ambiguity resolution, as they suggest that variables other than meaning frequency and context can influence resolution of noun-noun ambiguities. |

Huestegge, Lynn; Skottke, Eva Maria; Anders, Sina; Müsseler, Jochen; Debus, Günter The development of hazard perception: Dissociation of visual orientation and hazard processing Journal Article In: Transportation Research Part F: Traffic Psychology and Behaviour, vol. 13, no. 1, pp. 1–8, 2010. @article{Huestegge2010e,Eye movements are a key behavior for visual information processing in traffic situations and for vehicle control. Previous research showed that effective ways of eye guidance are related to better hazard perception skills. Furthermore, hazard perception is reported to be faster for experienced drivers as compared to novice drivers. However, little is known whether this difference can be attributed to the development of visual orientation, or hazard processing. In the present study, we compared eye movements of 20 inexperienced and 20 experienced drivers in a hazard perception task. We separately measured (a) the interval between the onset of a static hazard scene and the first fixation on a potential hazard, and (b) the interval between the first fixation on a potential hazard and the final response. While overall RT was faster for experienced compared to inexperienced drivers, the scanning patterns revealed that this difference was due to faster processing after the initial fixation on the hazard, whereas scene scanning times until the initial fixation on the hazard did not differ between groups. © 2009 Elsevier Ltd. All rights reserved. |

McMullen, Patricia A.; MacSween, Lesley E.; Collin, Charles A. Behavioral effects of visual field location on processing motion- and luminance-defined form Journal Article In: Journal of Vision, vol. 9, no. 6, pp. 1–11, 2009. @article{McMullen2009,Traditional theories posit a ventral cortical visual pathway subserving object recognition regardless of the information defining the contour. However, functional magnetic resonance imaging (fMRI) studies have shown dorsal cortical activity during visual processing of static luminance-defined (SL) and motion-defined form (MDF). It is unknown if this activity is supported behaviorally, or if it depends on central or peripheral vision. The present study compared behavioral performance with two types of MDF [one without translational motion (MDF) and another with (TM)] and SL shapes in a shape matching task where shape pairs appeared in the upper or lower visual fields or along the horizontal meridian of central or peripheral vision. MDF matching was superior to the other contour types regardless of location in central vision. Both MDF and TM matching was superior to SL matching for presentations in peripheral vision. Importantly, there was an advantage for MDF and TM matching in the lower peripheral visual field that was not present for SL forms. These results are consistent with previous behavioral findings that show no field advantage for static form processing and a lower field advantage for motion processing. They are also suggestive of more dorsal cortical involvement in the processing of shapes defined by motion than luminance. |

Fornos, Angélica Pérez; Sommerhalder, Jörg; Rappaz, Benjamin; Pelizzone, Marco; Safran, Avinoam B. Processes involved in oculomotor adaptation to eccentric reading Journal Article In: Investigative Ophthalmology & Visual Science, vol. 47, no. 4, pp. 1439–1447, 2006. @article{Fornos2006,PURPOSE: Adaptation to eccentric viewing in subjects with a central scotoma remains poorly understood. The purpose of this study was to analyze the adaptation stages of oculomotor control to forced eccentric reading in normal subjects. METHODS: Three normal adults (25.7 +/- 3.8 years of age) were trained to read full-page texts using a restricted 10 degrees x 7 degrees viewing window stabilized at 15 degrees eccentricity (lower visual field). Gaze position was recorded throughout the training period (1 hour per day for approximately 6 weeks). RESULTS: In the first sessions, eye movements appeared inappropriate for reading, mainly consisting of reflexive vertical (foveating) saccades. In early adaptation phases, both vertical saccade count and amplitude dramatically decreased. Horizontal saccade frequency increased in the first experimental sessions, then slowly decreased after 7 to 15 sessions. Amplitude of horizontal saccades increased with training. Gradually, accurate line jumps appeared, the proportion of progressive saccades increased, and the proportion of regressive saccades decreased. At the end of the learning process, eye movements mainly consisted of horizontal progressions, line jumps, and a few horizontal regressions. CONCLUSIONS: Two main adaptation phases were distinguished: a "faster" vertical process aimed at suppressing reflexive foveation and a "slower" restructuring of the horizontal eye movement pattern. The vertical phase consisted of a rapid reduction in the number of vertical saccades and a rapid but more progressive adjustment of remaining vertical saccades. 
The horizontal phase involved the amplitude adjustment of horizontal saccades (mainly progressions) to the text presented and the reduction of regressions required. |

Lehtimäki, Taina M.; Reilly, Ronan G. Improving eye movement control in young readers Journal Article In: Artificial Intelligence Review, vol. 24, no. 3-4, pp. 477–488, 2005. @article{Lehtimaeki2005,The objective of our study is to design and evaluate an oculomotor reading aid for beginning readers. The aid consists of an eye-tracking device and a computer program that gives real-time feedback in the form of a game to the subject about their fixation position on words. An experimental study was conducted with 8-year-old children. We evaluated the effectiveness of the aid for each child by comparing the landing site distributions before and after playing the game. We found that the peak of the landing site distribution moved towards the optimal viewing position (OVP) for word identification after playing the game. We also determined that training had a positive effect on gaze duration, on the mean and distribution of number of fixations per word, and on the percentage of words with refixations in the majority of subjects. |

Contact

If you would like us to feature your EyeLink research, have ideas for posts, or have any questions about our hardware and software, please contact us. We are always happy to help. You can call us (+1-613-271-8686) or email us.

References & Image Credits

- Header Image by Hermann (Pixabay License)
